Full screen triangle optimization

February 27th, 2023

So here’s a well-known optimization trick that also tells you a bit about how GPUs work.

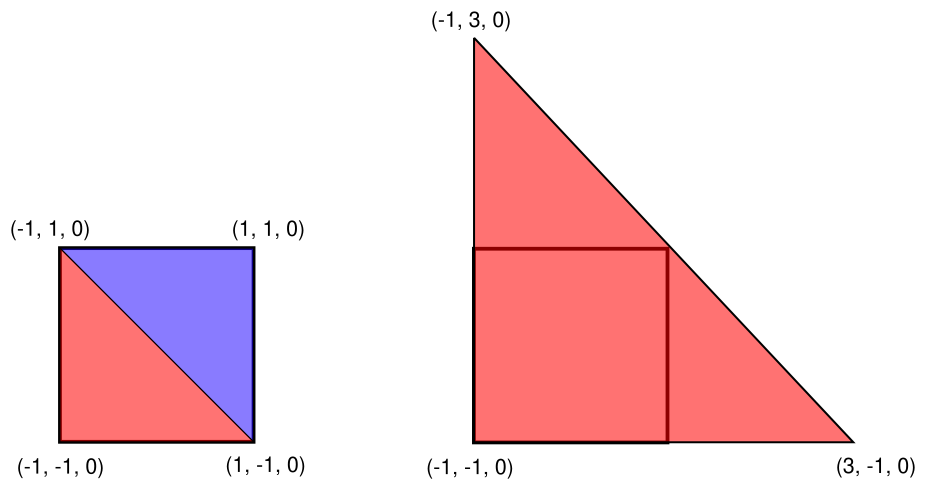

Full screen post processing effects are usually drawn as two big triangles that together cover the whole screen. A fragment shader then processes every pixel. But you can make it a tiny bit faster by drawing a single large triangle instead.

Setting up the triangle is convenient to do directly in a vertex shader with no vertex buffer bound.
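If it’s not obvious that one triangle is enough, here is a minimal C++ sketch that checks it on the CPU. It assumes the clip-space corners (-1, -1), (3, -1) and (-1, 3), the same ones the vertex shader below hard-codes. The names `Vec2`, `edge`, `insideTriangle` and `coversScreen` are made up for illustration and are not part of any API.

```cpp
struct Vec2 { float x, y; };

// Clip-space corners of the oversized triangle (counter-clockwise).
static const Vec2 kTriangle[3] = { {-1.0f, -1.0f}, {3.0f, -1.0f}, {-1.0f, 3.0f} };

// Edge function: >= 0 when p lies on or to the left of the edge a->b.
float edge(Vec2 a, Vec2 b, Vec2 p) {
    return (b.x - a.x) * (p.y - a.y) - (b.y - a.y) * (p.x - a.x);
}

// A point is inside a counter-clockwise triangle when it is on the
// inner side of all three edges.
bool insideTriangle(Vec2 p) {
    return edge(kTriangle[0], kTriangle[1], p) >= 0.0f &&
           edge(kTriangle[1], kTriangle[2], p) >= 0.0f &&
           edge(kTriangle[2], kTriangle[0], p) >= 0.0f;
}

// The visible region is the square [-1, 1] x [-1, 1]. A triangle is convex,
// so containing all four corners means containing the whole square.
bool coversScreen() {
    return insideTriangle(Vec2{-1.0f, -1.0f}) && insideTriangle(Vec2{1.0f, -1.0f}) &&
           insideTriangle(Vec2{-1.0f, 1.0f}) && insideTriangle(Vec2{1.0f, 1.0f});
}
```

The hypotenuse passes exactly through the corner (1, 1), so this is the smallest right triangle of this shape that still covers the screen. Everything outside the square gets clipped away.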

Call glDrawArrays(GL_TRIANGLES, 0, 3) with this vertex shader:

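    void main()
    {
        // Clip-space corners of a triangle that overhangs the screen.
        vec2 coords[] = {
            vec2(-1.0f, -1.0f),
            vec2(3.0f, -1.0f),
            vec2(-1.0f, 3.0f),
        };

        // No vertex buffer needed: gl_VertexID (0, 1, 2) picks the corner.
        gl_Position = vec4(coords[gl_VertexID], 0.0f, 1.0f);
    }

There are three reasons why the single triangle approach is faster.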

- The GPU executes fragment shaders in lockstep on 2x2 pixel quads in order to support automatic mip level selection. A quad that straddles the edge where the two triangles meet is shaded once for each triangle, so your shader ends up running twice on some pixels there. This is mandated by the Direct3D and OpenGL specifications.

- In actual hardware, shading is done 32 or 64 pixels at a time, not four. The problem above just got worse.

- Covering the full screen with a single triangle can make your shader’s memory access patterns linear. See the “case study” section in this AMD performance tuning guide for an example of this and the second point.
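To put a rough number on the first point, here is a small C++ simulation. It’s a sketch under simplifying assumptions, not a model of any particular GPU: every 2x2 quad touched by a triangle is shaded in full, the screen is split along one diagonal, and pixel centers that land exactly on the diagonal are handed to the first triangle as a stand-in for the real fill rule. The names `Layout`, `covered` and `shadedInvocations` are hypothetical.

```cpp
#include <cstdint>

// Which primitive layout to simulate.
enum class Layout { TwoTriangles, SingleTriangle };

// Simplified coverage test for a width x height screen split along the
// diagonal from (0, height) to (width, 0). Pixel centers sit at
// (x + 0.5, y + 0.5); everything is scaled by 2 to stay in integers.
// Assumption: centers exactly on the diagonal belong to triangle 0.
bool covered(Layout layout, int tri, int x, int y, int width, int height) {
    if (layout == Layout::SingleTriangle) return true;  // one triangle covers all
    const std::int64_t lhs = std::int64_t(2 * x + 1) * height +
                             std::int64_t(2 * y + 1) * width;
    const std::int64_t rhs = 2LL * width * height;
    return tri == 0 ? lhs <= rhs : lhs > rhs;
}

// Counts fragment shader invocations when every 2x2 quad touched by a
// triangle is shaded in full, including its uncovered pixels.
// Assumes width and height are even.
std::int64_t shadedInvocations(Layout layout, int width, int height) {
    const int triCount = (layout == Layout::TwoTriangles) ? 2 : 1;
    std::int64_t invocations = 0;
    for (int tri = 0; tri < triCount; ++tri) {
        for (int qy = 0; qy < height; qy += 2) {
            for (int qx = 0; qx < width; qx += 2) {
                bool touched = false;
                for (int dy = 0; dy < 2; ++dy)
                    for (int dx = 0; dx < 2; ++dx)
                        touched = touched ||
                                  covered(layout, tri, qx + dx, qy + dy, width, height);
                if (touched) invocations += 4;  // the whole quad runs
            }
        }
    }
    return invocations;
}
```

On a toy 8x8 screen this gives 64 invocations for the single triangle and 80 for the pair, because the four quads on the diagonal are shaded twice. The extra work grows with the length of the diagonal while the total grows with the screen area, so the relative overhead shrinks quickly at real resolutions.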

In my microbenchmark¹ the single triangle approach was 0.2% faster than two. We are definitely deep into micro-optimization territory here :) I suppose if you read textures in your shader you’ll see larger gains, as happened in the AMD case study linked above.

For more details on the mechanics of the automatic mip selection, see the section “Hardware Partial Derivatives” in this 2021 article by John Hable.

Of course, you could use compute shaders here, but you need to make sure the memory access patterns play well with whatever format your textures (and the framebuffer?) are stored in. So it’s not an obvious win if you take code complexity into account.

Finally, if you still end up drawing two triangles, make sure you’re drawing a four-vertex triangle strip and not a six-vertex list. I’m not claiming there’s a speed difference – this is only for the nerd cred!
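For the curious, here is a C++ sketch of how four strip vertices turn into the same two triangles, as you would get from something like `glDrawArrays(GL_TRIANGLE_STRIP, 0, 4)`. The helper names (`expandStrip`, `signedArea2`, `fullScreenStrip`) are invented for this example, and the exact vertex order inside odd triangles differs between APIs, so treat the swap below as an illustrative assumption that merely keeps the winding consistent.

```cpp
#include <array>
#include <cstddef>
#include <vector>

struct Vec2 { float x, y; };
using Triangle = std::array<Vec2, 3>;

// Expands a triangle strip into individual triangles. Each new vertex forms
// a triangle with the two before it; on odd triangles the first two vertices
// are swapped so that every triangle keeps the same winding.
std::vector<Triangle> expandStrip(const std::vector<Vec2>& strip) {
    std::vector<Triangle> triangles;
    for (std::size_t i = 0; i + 2 < strip.size(); ++i) {
        if (i % 2 == 0)
            triangles.push_back(Triangle{strip[i], strip[i + 1], strip[i + 2]});
        else
            triangles.push_back(Triangle{strip[i + 1], strip[i], strip[i + 2]});
    }
    return triangles;
}

// Twice the signed area; positive for counter-clockwise triangles.
float signedArea2(const Triangle& t) {
    return (t[1].x - t[0].x) * (t[2].y - t[0].y) -
           (t[1].y - t[0].y) * (t[2].x - t[0].x);
}

// Four clip-space corners of the screen in strip order.
std::vector<Vec2> fullScreenStrip() {
    return { {-1.0f, -1.0f}, {1.0f, -1.0f}, {-1.0f, 1.0f}, {1.0f, 1.0f} };
}
```

Expanding `fullScreenStrip()` yields two counter-clockwise triangles whose areas add up to the 2x2 clip-space square, which is exactly what a six-vertex list would describe with two of the corners repeated.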

For more tricks like this in general, see Bill Bilodeau’s 2014 GDC talk on the subject (slides).

Thanks to mankeli for feedback on a draft of this post.